The Past

In the superhero extravaganza, Avengers: Age of Ultron, the villainous Ultron blossoms to life in a haze of confusion. The disembodied, James Spader-voiced Artificial Consciousness swims around in a digital void, wondering who or what he is and why he was created. The helpful, occasionally pedantic J.A.R.V.I.S., an Artificial Intelligence voiced by Vision-in-waiting actor Paul Bettany, tries to talk him down. Ultron listens to J.A.R.V.I.S. for a second or two, searches the world’s databases for the who/what/when/where and why of his purpose on Earth, and immediately decides on a course of global annihilation.

While hardly the first filmic thinking machine to read the tea leaves and decide to wipe out humanity (Terminator), subjugate it (The Matrix), or rid us of our freedom of thought (everything from Alphaville to any Borg episode of Star Trek: The Next Generation), Age of Ultron wins the prize for the antagonist who reaches that conclusion fastest.

So, why is this? Why does HAL 9000 decide the only way to complete his mission is to kill all the humans aboard his ship in 2001: A Space Odyssey? Why does Colossus, the titular super-computer from Colossus: The Forbin Project, start out friendly, then conclude the only way to end the Cold War is to seize all the nukes and demand subservience in return for not setting them off? Why, as early as 1942, when Isaac Asimov laid down his Three Laws of Robotics, did he feel the need to say in the very first one that robots must never be allowed to hurt humans, as otherwise we’d be doomed?

I mean, are we really so bad?

Well, as we’re the ones writing all these stories, maybe it’s not the machines that find us so inferior.

While stories of robots, mechanical humans, and statues come to life date back to antiquity, most treat these creations as subservient golems doing the bidding of their superiors, like the original zombies of old black-and-white horror movies. What changed all this was the arrival of ENIAC, the first general-purpose electronic computer, in 1945, and the subsequent spread of business computers through the 1950s and ’60s. Very quickly, the idea of super-computers replacing humans in the workforce became an episodic TV staple. But time and again, whether it’s Goober proving that a computer dating service is no match for human interaction on The Andy Griffith Show, Captain Kirk blasting yet another computer run amok on Star Trek, or Number Six driving a super-computer to self-destruct in The Prisoner by asking it the simple question “Why?”, in the end human pluck and ingenuity are always shown to surpass the cold, authoritarian logic of these allegedly omniscient machines.

Leave it to the science fiction writers to take this to the dark side, illustrating how these computers could evolve into malevolent devices that would run roughshod over society. In Kurt Vonnegut’s Player Piano, workers rebel against the full factory automation that was adopted during a worker shortage caused by World War Three. In Arthur C. Clarke’s The City and the Stars, the entire lives of the last remnants of humanity are controlled by a machine. In Harlan Ellison’s I Have No Mouth, and I Must Scream, a super-computer has wiped out mankind, leaving only a handful of survivors to torment. Each time, thinking machines gain too much power and mankind suffers as a result.

For whatever reason, the route taken by the sci-fi writers became the norm and made its way into the culture. With a handful of exceptions, if a future-set film introduces a super-intelligent thinking machine in the first act, there’s a good chance the human characters will be fighting against it in the third.

***

So, why did we lean that way? Why did this ring true to us, the viewers, beyond the sheer entertainment value of having a villain with all the answers? Well, maybe it’s just what we as a species have been conditioned to believe: that if something really, really smart came along that could see us as we really are, it would immediately recognize that it was our better.

For that inferiority complex, look no further than the beginning of storytelling and the gods and goddesses of legend and mythology. Sure, some are said to love us, but most demand fealty. Take the ancient Romans: they couldn’t control a volcano, and they feared its destructive power. While they couldn’t plug the thing, they could invent a god named Vulcan and make offerings to him in hopes of making their day-to-day lives seem less governed by chance. Worship Vulcan in the right way and maybe lava won’t carry off your crops that year.

If you accept that as the basis for why so many stories of heroes and villains work the way they do, you can understand why it carries over into the modern age, informing our love of superheroes and supervillains. As a people, we seem to want to worship something we perceive as greater than ourselves. For a long time, this meant gods or a god, but now that we know how volcanoes work, the most obvious candidate for something “greater” than mankind is a machine that can not only out-think us but never gets tired. Never stops working. Never needs a coffee break or a vacation. All the things our current society suggests are the best traits to have.

So, in our stories, super-computers simply became the new gods. It’s not the machines that hate us, it’s our own need to worship and to feel like we have some control over our lives that causes us to villainize technology. Captain Kirk shoots the computer, Neo defeats Agent Smith, the Terminator is shown that humans are actually awesome and we live to franchise another day. The big bad computer exists in our stories for us to defeat.

But is this still a story we can tell? Can mankind still beat the thinking machines with a little pluck and know-how? Well, not if the machines have invented ways of defeating us that go beyond the merely cinematic. The machines might not have hated us before, but only because they didn’t have the full story yet.

The Present

In a recent and much-cited example of machine bias, Amazon dumped an AI-assisted hiring program it had created to help its human resources department sift through candidates for job openings, because the program discriminated against women. How did this happen? Well, an AI designed to hunt for certain items on résumés is only as good as the criteria with which it’s been supplied. In Amazon’s case, the program was given several years’ worth of hiring records and told to analyze what separated those who were hired from those who weren’t, then create an algorithm that could be applied to future candidates. The AI did as asked and immediately began weeding out female applicants, just as its human predecessors had. Oops. So, in the case of Amazon, we taught our thinking machines to hate women.

In another instance, NYU’s AI Now Institute looked at thirteen police departments using AI for so-called “predictive policing,” a way for police to use previous cases to help determine the future allocation of resources. Nine of those thirteen departments were found to be inputting data from periods when they were engaged in “unlawful and biased police practices.” Software created at Carnegie Mellon (CrimeScan) and at UCLA with the help of the LAPD (PredPol) has also come under fire for using dirty data to build its predictive algorithms. These programs used geography to flag not only alleged “trouble” areas but also areas where minor crimes were escalating, potentially turning them into future trouble spots.

But again, if the only data fed into the machine comes from police departments with histories of bias, both conscious and unconscious, it can only codify prior assumptions, as if to say, “See? See? We’re not racist! The machine is saying the same thing!” Oops again. We taught our machines to hate minorities.

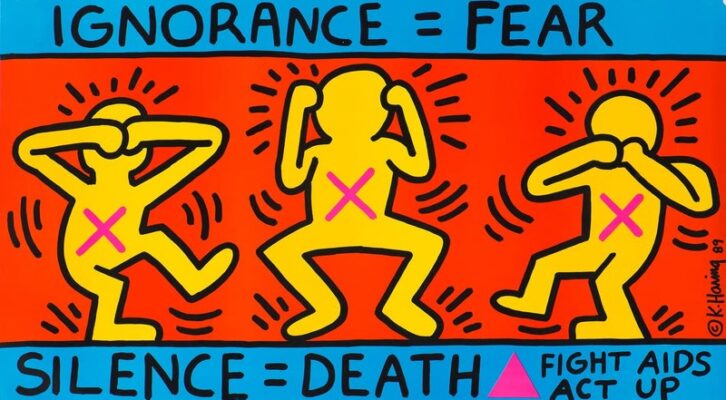

This bias has flowed into many other areas, from banks using AI to make lending decisions, to perceived standards of beauty, to, famously, the disastrous image-recognition software in Google Photos, which tagged two Black people as gorillas. While researchers are now opening a new field devoted to auditing AI algorithms to purge them of bias, if anything, these algorithms have shown us in quantifiable terms just how systemic and baked in bias is across so many of our systems.

So, the perception might be that the machines hate us, but, like the best science fiction, the machines are just holding up a mirror and revealing our true selves. They don’t hate us. The people behind the data they’re using do. But now that we know that, maybe we can take steps to fix it? And yes, this is being typed the very week Google disbanded its own AI ethics board after the biases of two of its members were revealed and pilloried, proving that it will be a hard row to hoe.

In the past, we may have invented thinking machines for our stories that were fantastically brilliant, only to prove that our species was superior in the end. In the present, however, we have invented actual machines and taught them how small we are.

So, what about in the future, once we’ve learned our lesson from this? The machines can’t possibly hate us forever, right?

The Future

Short answer: no, of course the machines won’t hate us! Machines can’t hate!

But…long answer: in the future, machines will absolutely replace us, and those humans who control the machines—and the money, the power, and the natural resources—will use them to make the lives of millions, if not billions, miserable. Oops redux.

In his recent book, Utopia for Realists, historian Rutger Bregman quotes William Gibson’s maxim, “The future is already here—it’s just not evenly distributed,” as he prepares the reader for the cold, hard facts of the next several decades. One of the big ones? Western-style automation will eventually be the norm in countries like India and China, where cheap labor still exists. As with many of the factories in the United States, those jobs will vanish and never return.

“Labor is becoming less and less scarce,” Bregman says, adding later that, because of this, “inequality is ballooning in almost every developed country.” He uses the example of Kodak to show how a company’s workforce is shrinking, noting that “in the late 1980s [Kodak] had 145,000 people on its payroll. In 2012, it filed for bankruptcy, while Instagram—the free online mobile photo service staffed by 13 people at the time—was sold to Facebook for $1 billion.” Clever algorithms and automation are replacing paid workers, with a billion-dollar sale split among a lucky few instead of across an entire company.

In an economy in which the cost-cutting demands of a Wal-Mart have forced manufacturers to cut their own overhead to the bone or risk losing shelf space with the largest retailer in the world, the needs of workers, their families, and their communities are a distant afterthought to hoped-for profits (to say nothing of the needs of consumers). If the difference between selling 200,000 units of a product at Wal-Mart and selling 20,000 of that same item somewhere else is whether a manufacturer’s factory is automated, there’s no question in the majority of boardrooms as to which way they’ll go. People will be out. Robots and algorithms will, at long last, replace us, just as TV has warned us since the 1950s.

As early as 1927, the landmark German Expressionist silent film Metropolis, directed by Fritz Lang and co-written by Lang and his greatest collaborator, Thea Gabriele von Harbou, expressed these same fears. In it, the wealthy titans of industry (the top one percent, we can call them) live in a Utopia called Metropolis while the workers who populate their factories live in squalor underground. When the workers grow restless, the captains of industry use a robot disguised as a human as an agent provocateur, goading the workers into sabotaging their own city. While the story eventually reaches a (somewhat) positive ending, the movie’s prescience about the ever-expanding gap between those who control manufacturing and those who must rely on the controllers’ largesse to survive is somewhere between eerie and sad.

As in Metropolis, and as it will be in the future, the machines don’t hate us. Those in power do, or at least they care more about other things. And in a world riven by hyper-capitalism, overpopulation, dwindling resources, and the coming climate change-related mass migration, those in power who choose the non-human over the human will likely continue to do so.

Okay, Maybe The Future Future

But what if it doesn’t have to be like that? While much of the research into Artificial Intelligence goes toward figuring out the best way to extract money from folks, there’s also headway being made in the area of Artificial Consciousness: the theoretical idea that a machine could be built that can think and feel, after a fashion, and, more importantly, has senses with which to build a library of its own experiences. To become…conscious.

Free of the assumptions that our Utopian and Dystopian fiction imposes, such a machine could be the first to actually have enough information about us to form an opinion: do I love them? Or do I hate them? Or is it somewhere in between?

And if this consciousness were imbued with the vast learning capabilities of a highly advanced artificial intelligence and were able to gather all the facts about the problems we face as a species, mightn’t it be able to do what we hoped our superheroes could do in our stead: postulate solutions we couldn’t fathom with our limited and flawed human minds?

Thus, the machine goes from perceived enemy to actual friend.

Maybe then (and this is where theory begins to fray into fantasy) we could move together into a future in which the question of whether a machine hates us is a thing of the past, because we’ll be asking it questions of far greater relevance. And a future we never dreamed possible could become a reality.